anubis benchmark: measuring proof-of-work overhead in headless chromium

on this page

overview

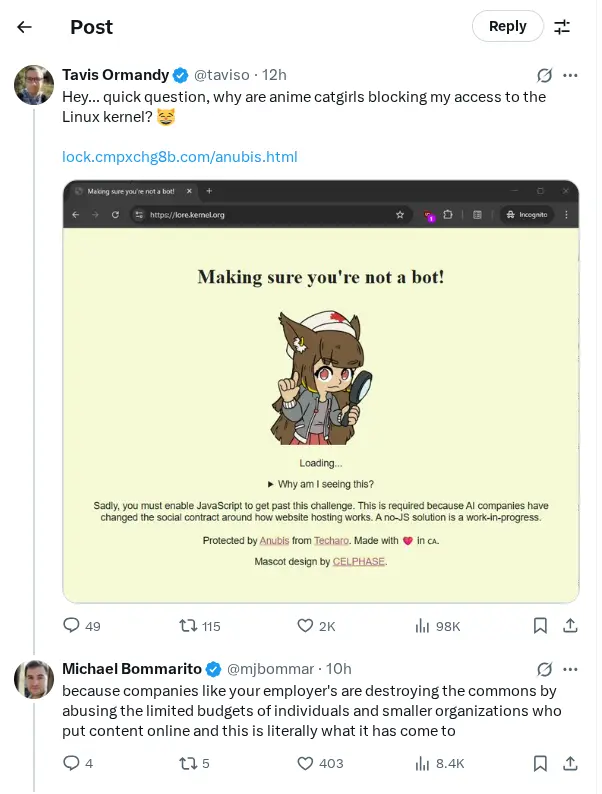

this started with tavis ormandy’s tweet and his analysis showing you could generate tokens for all 11,508 anubis-protected sites in about 6 minutes using raw c code on a free-tier gcp vm. he calculated it would cost less than a cent per month to bypass every anubis deployment.

that’s the theoretical angle. i wanted to measure the practical angle: what does it actually cost the typical vibe-coded scraper using headless chrome on reasonable hardware? turns out: about 1.35 seconds per request at standard difficulty, even if token generation itself is theoretically cheap.

what is anubis?

anubis is a proof-of-work proxy that makes scrapers solve sha256 puzzles before accessing protected sites. it’s deployed on git.kernel.org, gnome’s gitlab, and various other places where people got tired of ai companies helping themselves to everything.

key findings

tested on intel core ultra 7 165h using playwright/chromium in docker:

- challenge execution: 1,349ms average

- hash iterations: 118,243 at difficulty 4

- total request time: 1,535ms (including navigation)

- success rate: 100% (5/5 iterations)

- version tested: anubis 1.20.0, “fast” algorithm

how anubis works

anubis sits between clients and servers as a reverse proxy. when it sees something that looks like a browser (user-agent contains “mozilla”), it serves a proof-of-work challenge page instead of the actual content.

How It Works

- Request Interception: Anubis sits between clients and origin servers as a reverse proxy

- Bot Detection: Evaluates User-Agent for “Mozilla” string and other browser indicators

- Challenge Decision: Uses configurable rules and weight-based scoring to determine action

- Challenge Delivery: Serves a ~10KB HTML page with embedded JavaScript (fast or slow algorithm)

- Proof-of-Work Computation: Client browser performs SHA-256 hashing to find nonce with required leading zeros

- Token Generation: Successful computation generates a signed JWT token with challenge metadata

- Cookie Setting: Sets

techaro.lol-anubis-authcookie valid for one week - Validation & Access: Token signature verified using ed25519 keypair for subsequent requests

humans see a brief loading screen with an anime jackal. scrapers have to actually solve the puzzle, which takes real compute time. the jwt tokens are signed with ed25519, new keypair generated on each restart.

benchmark methodology

test environment

ran a simple benchmark using:

- Container Platform: Docker with Microsoft Playwright image (v1.54.0)

- Browser Engine: Chromium (headless mode)

- Target Site: git.kernel.org Linux kernel repository

- Test Hardware: Intel Core Ultra 7 165H (22 logical CPUs, 30GB RAM)

- Network: Residential fiber (>1 Gbps)

Measurement Protocol

// Benchmark configuration

const TARGET_URL =

'https://git.kernel.org/pub/scm/linux/kernel/git/torvalds/linux.git/tree/?h=v6.17-rc2';

const ITERATIONS = 5;

const TIMEOUT = 60000; // 60 seconds

const DELAY_BETWEEN_ITERATIONS = 5000; // 5 secondsEach iteration measured:

- Navigation time to challenge page

- Challenge parameter extraction

- Proof-of-work execution duration

- Total end-to-end request time

ormandy’s approach vs this benchmark

tavis ormandy tested the computational cost of generating tokens directly - essentially asking “how hard is the crypto puzzle?” his answer: not very. a free gcp vm can generate tokens for every anubis site in 6 minutes.

this benchmark tests something different: “what’s the actual overhead for a scraper?” because real scrapers don’t just generate tokens in c, they:

- run headless browsers (puppeteer, playwright, selenium)

- execute javascript in a sandboxed environment

- handle the full http request/response cycle

- deal with page rendering and dom manipulation

- manage browser process overhead

ormandy proved the math is cheap. this benchmark shows the engineering still costs time. both perspectives matter: his shows anubis won’t stop a determined attacker with custom tooling, mine shows it still works against commodity scrapers using standard tools.

the 1.35-second overhead might be trivial to bypass in theory, but most scrapers aren’t writing custom sha256 solvers. they’re running playwright.goto() and hoping for the best.

overhead considerations

docker adds minimal overhead for cpu-intensive tasks like sha256 hashing - the container abstraction doesn’t meaningfully impact cryptographic operations. playwright in headless mode is also often faster than headed chrome.

so the 1.35-second overhead we measured isn’t inflated by our testing stack - if anything, a real browser with ui would be slower. the time cost is inherent to javascript-based proof-of-work in a browser context, not an artifact of containerization or automation.

the javascript defense dividend

worth noting that modern react/vue/angular spa sites already require javascript to render anything useful, so they get anubis protection basically for free. it’s the old-school html sites that have to make a choice: stay accessible or add friction. on the other hand, they also typically have lower costs to host or simpler options for caching locally or at the edge.

this creates an interesting dynamic: the web’s shift toward javascript-heavy frameworks (usually criticized for accessibility) accidentally built in scraping resistance. anubis just makes it explicit. if your site already requires js to show content, adding a proof-of-work challenge is a marginal change. if you serve static html or run django or drupal, it’s a fundamental shift in accessibility philosophy.

results

timing breakdown

full benchmark data: results.json

| Metric | Min | Average | Max | Median | Std Dev |

|---|---|---|---|---|---|

| Navigation Time | 163ms | 180ms | 225ms | 170ms | 24ms |

| Challenge Execution | 1,337ms | 1,349ms | 1,368ms | 1,346ms | 13ms |

| Total Request Time | 1,506ms | 1,535ms | 1,584ms | 1,525ms | 29ms |

| Hash Iterations | 118,243 | 118,243 | 118,243 | 118,243 | 0 |

challenge configuration

kernel.org uses:

- algorithm: “fast” (multithreaded sha256 with webworkers)

- difficulty: 4 (standard protection)

- challenge id: ea484c87c5b52d8f

anubis has two modes:

- “fast”: multithreaded with webworkers

- “slow”: single-threaded (way slower)

computational impact

at difficulty 4, clients compute ~118,243 sha256 hashes:

- hash rate: ~87,600 hashes/second on my hardware

- power draw: maybe 15-20w additional cpu load

- carbon: ~0.01g co₂ per request (20w _ 1.35s = 0.027wh _ 367g co₂/kwh)

jwt token structure

solving the challenge gets you a jwt:

{

"challenge": "ea484c87c5b52d8f",

"nonce": 118243,

"response": "0000f3a8b2c9...",

"iat": 1755744902,

"nbf": 1755744842,

"exp": 1756349702 // week-long validity

}signed with ed25519, stored as cookie. good for a week.

weight-based thresholds

anubis can assign “weight” (suspicion scores) to requests and respond accordingly:

bots:

- name: generic-browser

user_agent_regex: Mozilla|Opera

action: WEIGH

weight:

adjust: 10thresholds trigger different challenges:

| weight | action | challenge | note |

|---|---|---|---|

| < 0 | allow | none | whitelisted |

| 0-9 | challenge | metarefresh | mild suspicion |

| 10-19 | challenge | fast (diff 4) | moderate |

| 20+ | challenge | fast (diff 8) | paranoid |

lets you scale response to threat level, assuming you configure it right.

economic impact

scraping cost multiplication

scraping 1 million pages:

without anubis:

- ~200ms per page

- ~56 hours total

- ~$2.80 on typical 8gb cloud instance ($0.05/hour)

with anubis (difficulty 4):

- ~1,535ms per page

- ~426 hours (17.75 days)

- ~$21.30 on typical 8gb cloud instance ($0.05/hour)

plus you’ll probably hit rate limits, get your ip banned, etc.

note that serverless options (lambda, cloud functions) are typically priced by cpu-time not wall-clock time and would be significantly more expensive for compute-intensive tasks like this.

difficulty scaling

| difficulty | iterations | time | cost multiple |

|---|---|---|---|

| 1 | ~1k | 100ms | 0.5x |

| 4 | ~118k | ~1.35s | 7.6x |

| 8 | ~1m | ~11s | 55x |

| 10 | ~10m | ~114s | 570x |

effectiveness

works well against

- high-volume scrapers (7-570x cost increase)

- headless browser farms (forces real js execution)

- simple http clients (no js = no access)

- distributed botnets (cost scales linearly)

less effective against

- targeted attacks (if they really want your data)

- state actors (unlimited compute budget)

- legitimate researchers or resources, like the internet archive, which reddit has now blocked

- api access (if there’s another way in)

environmental cost

proof-of-work shifts compute from server to client:

- ~0.01g co₂ per request at difficulty 4

- 1m requests/day = ~10kg co₂

- 365m requests/year = ~3.7 metric tons co₂

whether this matters depends on your perspective about burning cpu cycles to keep bots out.

implementation notes

for site operators

- start with difficulty 2-3, see what happens

- whitelist internet archive and friends

- use weight-based thresholds for graduated response

- watch false positive rates

- consider simpler alternatives for non-critical stuff

for scrapers

- pow challenges mean “go away”

- check robots.txt first

- use apis if they exist

- exponential backoff when you hit anubis

- factor compute costs into your economics

technical resources

benchmark code and data:

conclusion

anubis adds about 1.35 seconds per request at standard difficulty for me on a pretty decent processor. that’s nothing for a human but painful for a scraper trying to grab millions of pages in a hurry.

at difficulty 4, you’re looking at 7.6x cost increase. at difficulty 10, it’s 570x. whether that’s enough depends on how determined your adversary is - although travis already showed that a truly sophisticated party will likely find other ways anyway. (if you’re old enough, you’ll remember the thrill of a hard drive large enough to store rainbow tables.)

of course, this only tested one configuration: headless chrome in docker pretending to be a normal browser. as ormandy showed, if you write custom token generation code, you can bypass this trivially. but most scrapers aren’t doing that - they’re using puppeteer or playwright because that’s what handles javascript, cookies, and all the other web complexity.

the gap between “theoretically cheap” (ormandy’s 6 minutes for all sites) and “practically expensive” (1.35 seconds per request) is where anubis lives. it’s security through making the common path annoying, not through cryptographic hardness.

curl won’t even try. custom c code will laugh at it. but the median scraper using headless chrome? they’re paying the tax.

whether proof-of-work is the future of web defense or just this year’s speed bump remains to be seen. probably the latter.

p.s.: i have another article cooking on the topic of access control and remuneration, including concepts like TollBit and Brave, and this might get an update in the next few weeks as i finish that up.

future work

other things people should try:

- different scraper configurations (not just headless chrome on playwright)

- testing on serverless and known cloud instance types

- how well the weight-based thresholds actually work

- comparison with cloudflare’s challenges

benchmarked august 2025. your mileage may vary.